The Stuff of Thought is the Stuff of Experience

In this study (Fernandino et al., 2022), my colleagues and I addressed the question of how the contents of our thoughts – concepts, ideas, beliefs – are related to the physical world that we experience through our senses. This issue has long been debated among philosophers and cognitive scientists, a debate that can be traced back to the Ancient Greek philosophers Plato and Aristotle. According to Plato, humans are born with “innate ideas”, which are mental templates for all the sorts of things that we can name or recognize. These innate ideas were the soul’s memory of its prior existence in the “ideal world”. Plato argued that, since no two objects – say, trees – generate the exact same perceptual experience, it would be impossible for someone who comes across a particular tree for the first time to recognize it as the same kind of thing as the trees they have encountered before unless they had an abstract template for the category “tree” somewhere in their minds. In the absence of such a template, any new object would be experienced as completely unique rather than as a member of a kind. Innate ideas provided the abstract templates that allowed different things to be grouped together as a category. According to this view, as a child interacts with the world and comes across different things such as people, dogs, or trees, she would recognize each of them based on her innate, idealized template for that kind of thing. A similar view was later defended by the philosopher René Descartes, who argued for a sharp, qualitative distinction between the mortal sensing body and the immortal thinking soul.

Aristotle, on the other hand, argued that mental representations for natural kinds were not present from birth, but were learned from experience. By experiencing different trees, one gradually learns what is common to all of them (e.g., certain shapes, colors, sizes, etc.), and these commonalities, once they are abstracted away from particular experiences, provide the basis of the concept “tree”. This view was further developed by the 17th century philosopher John Locke, who famously stated that the human mind is born as a blank slate that is written on by experience.

Today, researchers are still split with respect to this issue. Of course, no cognitive neuroscientist seriously believes in Plato’s version of innate ideas or in Locke’s notion of tabula rasa, but a version of the debate persists. Some researchers argue that very basic natural kinds such as “animals”, “plants”, or “tools” have become encoded in our genome via natural selection over the course of human evolution, and that these a priori categories provide a basis for learning new concepts. They believe that concepts are represented in the brain in some kind of “symbolic” form, that is, the concept representation itself does not contain information about the sensory experiences through which it was learned. Just as computers represent information about images, sounds, or keystrokes using a symbolic digital code (i.e., the 0s and 1s in computer binary code), concepts would represent information about the world using a symbolic code that contains no information about visual, tactile, auditory, or other sensory experiences. Perceptual experiences of different trees would all be associated with the same conceptual symbol in the brain, thus allowing each of them to be recognized as an instance of the kind “tree”; however, the conceptual symbol for “tree” would contain no information about the sensory properties of those experiences. Because representations of this type would primarily encode information about category definitions, we call them “taxonomic” representations.

This view is opposed by researchers who argue that concepts are formed through gradual generalization over sensory experiences, and that they simply encode information about which features of experience (e.g., colors, shapes, textures, sizes, sounds, locations, smells, etc.) must be re-activated when the concept is recalled. This view, known as “embodied” or “situated” cognition, states that a concept is nothing but the condensed, abstracted record of the perceptual and emotional experiences that led to its formation. If that is true, then to think of the concept “apple” means to re-activate the sensory and affective representations that we learned to associate with that word, such as its typical shape, its typical color, its taste, its weight, and any emotions we have associated with it. There would be no symbolic code for concept representation that is dissociated from sensory and emotional information; the neural code for concepts would be a composite of the neural codes used for sensory and emotional representation.

More recently, some researchers have also proposed that the brain may represent word concepts (i.e., concepts that correspond to the meaning of a word, such as “dog”, “phone”, or “infinity”) by keeping track of how often specific words appear together during natural language use. The word “birthday”, for example, often occurs close to the words “years”, “old”, “party”, “cake”, etc., in conversation or in text. If the brain keeps tabs on how often a word co-occurs with every other word, it could, in principle, use that information to represent its meaning. In fact, that is how computer applications such as Google or Alexa represent word meaning. They can tell, for example, that “wolf” is more similar to “dog” than to “cat”, and that “peeling” goes with “orange” but not with “watermelon”. This type of information is called “distributional”, because it is based on how a word is “distributed” among the others in large bodies of text.
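To make the idea of distributional information concrete, here is a minimal sketch in Python. It counts how often words occur near each other in a tiny corpus and compares the resulting count vectors. The corpus, the window size, and the function names are all invented for illustration; this is not one of the distributional models used in the study, which are trained on vastly larger bodies of text.

```python
from collections import Counter, defaultdict
from math import sqrt

# Toy corpus invented for illustration; real models use very large text collections.
corpus = [
    "the dog chased the cat",
    "the wolf chased the deer",
    "the dog and the wolf howled",
    "the cat sat on the mat",
]

def cooccurrence_vectors(sentences, window=2):
    """For each word, count how often every other word appears within `window` positions."""
    vectors = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.split()
        for i, word in enumerate(tokens):
            lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    vectors[word][tokens[j]] += 1
    return vectors

def cosine(a, b):
    """Cosine similarity between two sparse count vectors (1.0 means identical direction)."""
    dot = sum(a[k] * b[k] for k in a if k in b)
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

vectors = cooccurrence_vectors(corpus)
dog_wolf = cosine(vectors["dog"], vectors["wolf"])
dog_mat = cosine(vectors["dog"], vectors["mat"])
print(f"dog~wolf: {dog_wolf:.2f}, dog~mat: {dog_mat:.2f}")
```

In this toy corpus, “dog” ends up closer to “wolf” than to “mat” simply because the first two words keep similar company. No information about what dogs or wolves look, sound, or feel like is involved.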

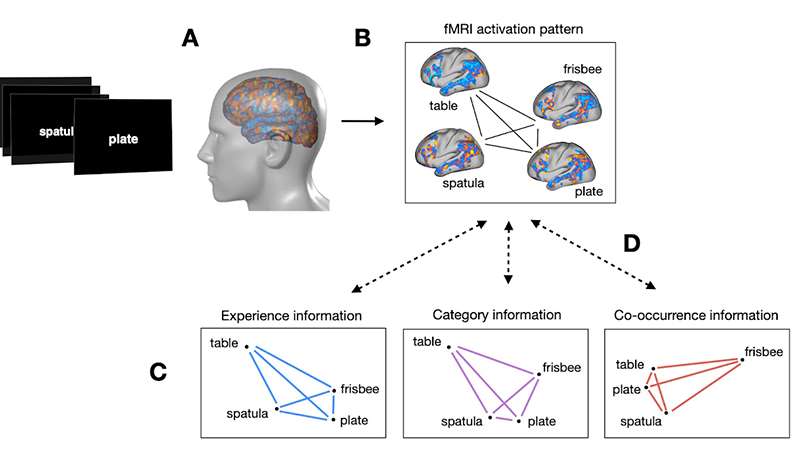

In our study, we attempted to find out how much of each type of information (taxonomic, experiential, and distributional) is encoded in the neural representations of word concepts. Our experimental approach is based on the fact that concepts can be more or less related to each other (e.g., “apple” is more related to “orange” than it is to “baseball”). However, the degree to which two concepts are related depends on the type of information used to define the relationship. For instance, based on perceptual information alone, “plate” is more related to “frisbee” than it is to “spatula”, even though “plate” and “spatula” are typically thought of as “kitchenware” and “frisbee” as a toy. And based on co-occurrence information alone, “plate” is more closely related to “table” than it is to either “spatula” or “frisbee”. If we can determine how related, or how similar, these concepts are to each other according to the brain, we can learn something about the kinds of information that are encoded in their neural representations. Thus, we set out to record the patterns of neural activation in the brain that correspond to different word concepts and determine their “similarity structure”, i.e., how similar they are to each other.

We used a neuroimaging technique known as functional magnetic resonance imaging (fMRI) to measure neural activity throughout the brain while participants read each word on the screen. Across two separate experiments, we did that for 524 different word concepts belonging to several categories, including animals, plants, tools, vehicles, places, human occupations, social events, natural events, and abstract concepts. This figure illustrates how we used the brain activation patterns induced by the words to determine the similarity structure of concept representations:

A. For each word presented, we recorded the corresponding level of neural activation at each point in the brain. Each word induces a unique pattern of activation across the brain.

B. We computed the similarity between the activation patterns generated by different words for all possible pairs of words. This is the “neural similarity structure” for word concepts.

C. For each type of information, we computed the predicted similarities for all pairs of words, resulting in a predicted similarity structure for each. (We actually used two different models for each information type, so there was a total of six predicted similarity structures.)

D. We then evaluated how alike the neural similarity structure was to each predicted similarity structure.
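Steps A through D can be sketched in a few lines of Python. Everything here is a simplified illustration: the activation values and model features are made-up numbers, and the choice of correlation-based dissimilarity and of Pearson correlation for the final comparison are assumptions, not necessarily the exact measures used in the study.

```python
from itertools import combinations
from math import sqrt

def pearson(x, y):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / sqrt(vx * vy)

def dissimilarity_structure(patterns):
    """Step B/C: 1 - correlation for every unordered pair of words, in a fixed order."""
    words = sorted(patterns)
    return [1 - pearson(patterns[a], patterns[b]) for a, b in combinations(words, 2)]

# Step A (hypothetical): one activation value per brain location for each word.
neural_patterns = {
    "apple":    [0.9, 0.8, 0.1, 0.2, 0.5],
    "orange":   [0.8, 0.9, 0.2, 0.1, 0.6],
    "baseball": [0.2, 0.1, 0.9, 0.8, 0.4],
    "spatula":  [0.1, 0.3, 0.7, 0.9, 0.3],
}

# Hypothetical model features for the same words (e.g., ratings on three dimensions).
model_features = {
    "apple":    [0.9, 1.0, 0.3],
    "orange":   [1.0, 0.9, 0.2],
    "baseball": [1.0, 0.0, 1.0],
    "spatula":  [0.1, 0.0, 0.2],
}

neural_structure = dissimilarity_structure(neural_patterns)  # step B
model_structure = dissimilarity_structure(model_features)    # step C

# Step D: how well does the model's structure match the neural one?
match = pearson(neural_structure, model_structure)
print(f"model-to-brain match: {match:.2f}")
```

With four words there are six word pairs, so each similarity structure is a list of six numbers. The full set of word concepts in an experiment yields tens of thousands of pairs, but the logic is the same.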

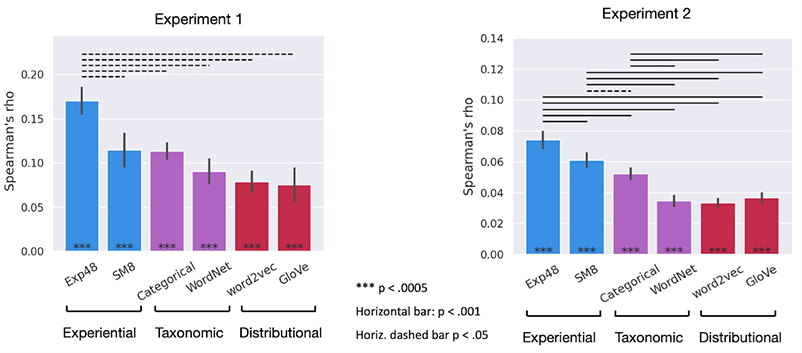

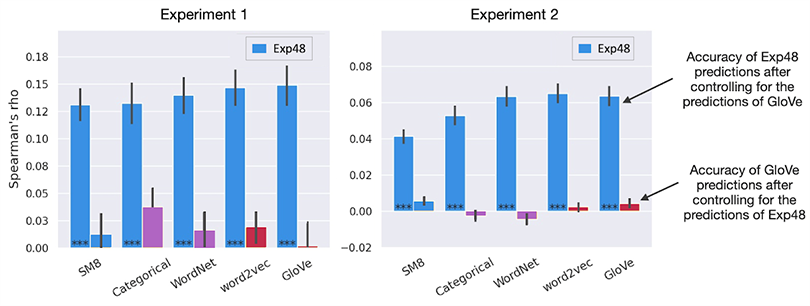

The correlation (i.e., match) between each predicted similarity structure and the neural similarity structure is shown in the bar graph:

The stars (***) on each bar show that all predicted similarity structures matched the neural similarity structure well above chance level (p < .0005), meaning that all three types of information appear to be encoded in the neural representation of word concepts. The horizontal lines above the bars indicate statistically significant differences between them, showing that the similarity structures predicted by both experiential models were more like the neural similarity structure of concepts than any of the other models’ similarity structures. This indicates that experiential information is more important than taxonomic or distributional information for concept representation.

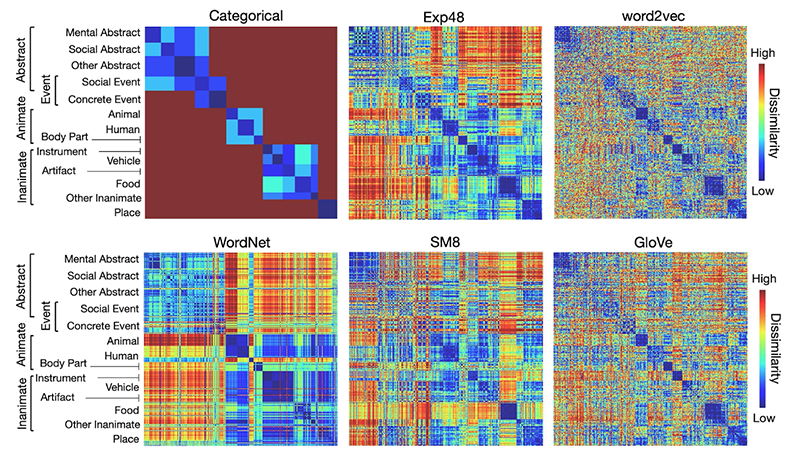

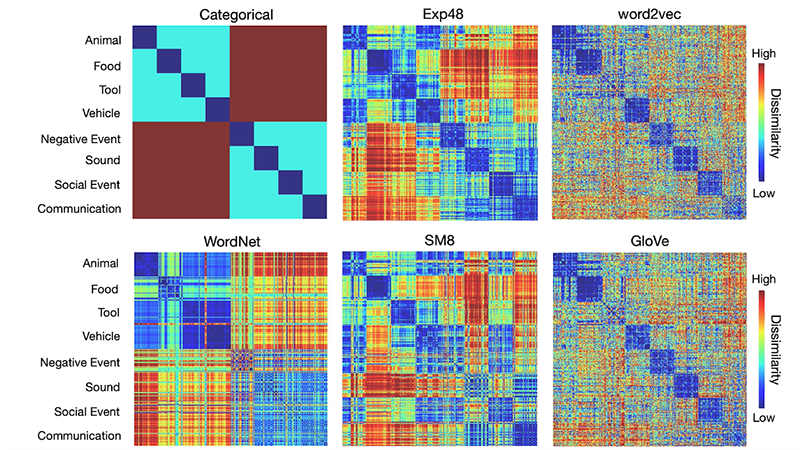

Importantly, however, while the predicted similarity structure from each model is unique, predictions from different models still resemble each other to some extent. The resemblance is visible when we depict the similarity structure predicted by each model as a color-coded matrix, as in the figures below. Each row and each column represents the dissimilarity between one concept and all the others. The diagonal shows the dissimilarity between each concept and itself, which is always zero. Concepts are grouped according to taxonomic categories just for visualization purposes.

Experiment 1 (300 concepts)

Experiment 2 (320 concepts)

Therefore, to be able to determine what kinds of information are actually encoded in neural concept representations, we need to evaluate how well each model predicts the neural similarity structure after controlling for its similarity to the other models. The results are shown in the chart below, where the tall blue bars show how well the winning model (the experiential model Exp48) predicted the neural similarity structure of concepts after we controlled for the effect of each of the other models. The small bars show how well each of the other models did after we controlled for the effect of Exp48.
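One standard way to “control for” a competing model is a partial correlation, which asks how well one model matches the neural data once the part shared with another model has been removed. The sketch below uses the textbook first-order partial correlation formula with invented numbers. It is meant only to illustrate the logic; the exact statistical procedure used in the study may differ.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / sqrt(vx * vy)

def partial_correlation(x, y, z):
    """Correlation between x and y after removing what each shares linearly with z."""
    r_xy, r_xz, r_yz = pearson(x, y), pearson(x, z), pearson(y, z)
    return (r_xy - r_xz * r_yz) / sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))

# Hypothetical pairwise dissimilarities, one value per word pair (invented numbers).
neural  = [0.2, 0.9, 0.7, 0.4, 0.8, 0.3]
model_a = [0.1, 0.8, 0.6, 0.5, 0.9, 0.2]  # stands in for one model's predictions
model_b = [0.3, 0.7, 0.8, 0.2, 0.6, 0.5]  # stands in for a competing model

a_given_b = partial_correlation(neural, model_a, model_b)
b_given_a = partial_correlation(neural, model_b, model_a)
print(f"model A controlling for B: {a_given_b:.2f}")
print(f"model B controlling for A: {b_given_a:.2f}")
```

If a model's match to the brain drops toward zero once another model is controlled for, its apparent success came mostly from the predictions the two models have in common.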

These results show that, once we take into account the similarities between models, only the experiential model Exp48 predicts the similarity structure of concepts above chance level. The other models only predicted the neural similarity structure of concepts to the extent that their predictions matched Exp48’s predictions. This is a strong indication that concept representation relies primarily on experiential information, and that neither taxonomic nor distributional information, on its own, contributes much. We conclude that the patterns of neural activity that represent conceptual knowledge in the brain encode sensory-motor and affective information about each concept, contrary to the idea that concept representations are independent of sensory-motor experience. In other words, with respect to the relationship between conceptual thought and the senses, Aristotle and Locke were on the right track; Plato and Descartes were dead wrong.

This finding contributes to our understanding of the neural mechanisms underlying language learning, language comprehension, and semantic language impairments caused by stroke and neurodegenerative disorders. It also paves the way for further research aimed at decoding conscious thought from brain activity, which would allow patients with phonological language disorders (who can neither speak nor write) or locked-in syndrome (who are completely paralyzed) to communicate via brain-computer interface technology.

Study Summary & Support

Summary of Fernandino, L., Tong, J.-Q., Conant, L. L., Humphries, C. J., and Binder, J. R. (2022). Decoding the information structure underlying the neural representation of concepts. Proceedings of the National Academy of Sciences of the United States of America, 119(6), e2108091119

The study was co-authored by Jia-Qing Tong, Colin Humphries, Lisa Conant, and Jeffrey Binder, with generous support from the Department of Neurology of the Medical College of Wisconsin, the National Institutes of Health, and the Advancing a Healthier Wisconsin Endowment. We thank Lizzie Awe, Jed Mathis, and the study participants for their help.